7 min. read

An Inside Look at Passbolt’s First Hackathon

Passbolt had their first ever hackathon. For three epic days, seven teams battled it out, but only one emerged as the ultimate champion.

In today’s digital landscape, data leaks and security breaches have become all too common. As a result, organisations are realising the importance of incorporating security throughout the software development process. If you’re a developer working on your latest masterpiece, a well-built castle that will revolutionise the world, you’ll need to make sure your security stands the test of time. So, let’s go on a quest to explore the importance of a secure development lifecycle and look at some of the mistakes developers unwittingly make.

If everything you develop is a castle, a secure development lifecycle (SDL) is a moat filled with acid-spitting, man-eating alligators. It’s not just a fancy term or a buzzword we throw around. In technical terms, it’s the integration of security practices throughout the entire software development process, a strategy for proactively identifying and eliminating vulnerabilities.

The aim is to identify and mitigate vulnerabilities in a proactive manner. From design to development, it’s important to have risk and threat management in place at every stage. By embracing an SDL, you’re not only taking steps to improve the security posture of your application, but you’re also helping your organisation build trust with customers and protect sensitive information.

Incorrectly configuring JSON Web Tokens (JWTs) can lead to a critical security vulnerability known as Cross-JWT confusion. This occurs when the misconfigured token validation process causes different JWTs with distinct purposes, scopes, or access levels to be interchanged accidentally. As a result, an attacker can manipulate the system by replacing one valid token with a different one.

This can allow unauthorised access to your resources, bypassing the intended authorisation and authentication. To prevent Cross-JWT confusion, it’s vital to configure and validate JWTs based on their intended purpose so that they can’t be inadvertently interchanged.

At passbolt, we experienced a similar JWT-related vulnerability in November 2021, and it was caught by Cure53. The token validation process didn’t distinguish between different JWTs with distinct purposes. If exploited, the vulnerability could have allowed an attacker to gain access to resources and bypass authorisation and authentication methods. Once the issue was discovered, we took action to fix it by enhancing the token validation process, ensuring that JWTs are properly configured and validated. Want to know more about this incident? You can read all about it in the reports on the website.

Different recipient attacks can be a big risk when it comes to JWTs. These attacks happen when an attacker sends a token intended for one recipient to a different recipient (hence the name). Let’s consider an example where an authorisation server issues a token for a third-party service. The signed JWT includes claims for the subject and role (sub and role) but doesn’t name an intended recipient or issuer. If another API that’s unrelated to the intended recipient relies solely on signature validation, you run into a different recipient vulnerability.

You can prevent different recipient attacks by enhancing token validation beyond relying only on the signature. Implement additional security measures such as unique per-service keys or secrets, and use specific claims like the audience (aud) claim. By including and validating the audience claim, you specify the intended audience, so even if the signature is valid, the token cannot be used on other services that don’t share the same audience claim. This reinforces the security of the system and prevents unauthorised access to different recipients.
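To make the audience check concrete, here is a minimal sketch using only the Python standard library. It assumes HS256-signed tokens and invents the service names `billing-api` and `reports-api` purely for illustration. It also skips expiry and header checks, so in a real project a maintained JWT library would be the safer choice.

```python
import base64
import hashlib
import hmac
import json


def b64url_decode(segment: str) -> bytes:
    # JWT segments drop base64 padding, so restore it before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def b64url_encode(raw: bytes) -> str:
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def sign_hs256(claims: dict, key: bytes) -> str:
    # Demo helper only: builds a compact HS256-signed token.
    header = b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = b64url_encode(json.dumps(claims).encode())
    signing_input = f"{header}.{payload}".encode()
    signature = hmac.new(key, signing_input, hashlib.sha256).digest()
    return f"{header}.{payload}.{b64url_encode(signature)}"


def verify_for_audience(token: str, key: bytes, expected_aud: str) -> dict:
    header_b64, payload_b64, signature_b64 = token.split(".")
    signing_input = f"{header_b64}.{payload_b64}".encode()
    expected = hmac.new(key, signing_input, hashlib.sha256).digest()
    if not hmac.compare_digest(expected, b64url_decode(signature_b64)):
        raise ValueError("invalid signature")
    claims = json.loads(b64url_decode(payload_b64))
    # A valid signature is not enough: the token must be addressed to us.
    audiences = claims.get("aud", [])
    if isinstance(audiences, str):
        audiences = [audiences]
    if expected_aud not in audiences:
        raise ValueError("token was issued for a different audience")
    return claims
```

With this in place, a token minted for `billing-api` is rejected by a service that expects `reports-api`, even though both would accept the signature.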

Employing vulnerable signature algorithms or inadequate key strengths when generating or verifying JWTs can be a big deal. Attackers can tamper with the content of the token without invalidating the signature, which can give them access or let them escalate their privileges.

Weak JWT signatures can also be exploited to forge valid JWTs, allowing a threat actor to impersonate trusted entities in order to gain access to sensitive data. A weak signature also increases the likelihood of the signing key being compromised, which can lead to data leaks. To reduce the risk, pair your JWTs with strong signature algorithms (such as RSA-SHA256 or ECDSA-SHA256) and make sure the keys are of sufficient length.
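One way to enforce this is an allow-list that is checked before any signature verification happens. The sketch below is an assumption about how such a check could look: the allowed algorithms and the minimum key sizes (2048 bits for RS256, 256 bits for ES256) are illustrative policy values, and `check_header` is a hypothetical helper name.

```python
import base64
import json

# Assumed policy: algorithm name -> minimum key size in bits.
ALLOWED_ALGORITHMS = {"RS256": 2048, "ES256": 256}


def check_header(token: str, key_bits: int) -> str:
    header_b64 = token.split(".")[0]
    padded = header_b64 + "=" * (-len(header_b64) % 4)
    header = json.loads(base64.urlsafe_b64decode(padded))
    alg = header.get("alg")
    # Never trust the header blindly: "none" and unexpected algorithms
    # are rejected before any verification is attempted.
    if alg not in ALLOWED_ALGORITHMS:
        raise ValueError(f"algorithm {alg!r} is not allowed")
    if key_bits < ALLOWED_ALGORITHMS[alg]:
        raise ValueError("key is too short for this algorithm")
    return alg
```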

When implementing JSON Web Tokens (JWT), another common mistake is to store them in local storage. This introduces inherent vulnerabilities as it’s susceptible to cross-site scripting (XSS) attacks. Malicious scripts injected into the web application can access the local storage and extract the JWT, potentially allowing further access and compromising user accounts.

Rather than relying solely on local storage, developers should prioritise secure storage mechanisms for tokens, such as encrypted cookies or server-side sessions, to address these challenges. With the adoption of these alternatives, the risks associated with XSS attacks and token exposure can be significantly mitigated.
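As a rough illustration of the cookie route, the sketch below builds a `Set-Cookie` value with Python’s standard `http.cookies` module. The cookie name `session`, the 15-minute lifetime and the `Strict` SameSite policy are assumptions for the example. The key point is the `HttpOnly` flag, which keeps the value out of reach of injected scripts.

```python
from http.cookies import SimpleCookie


def build_session_cookie(token: str) -> str:
    cookie = SimpleCookie()
    cookie["session"] = token
    cookie["session"]["httponly"] = True      # not readable from JavaScript
    cookie["session"]["secure"] = True        # only sent over HTTPS
    cookie["session"]["samesite"] = "Strict"  # not sent on cross-site requests
    cookie["session"]["path"] = "/"
    cookie["session"]["max-age"] = 900        # assumed 15-minute lifetime
    # Returns the value for a Set-Cookie response header.
    return cookie["session"].OutputString()
```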

Mistakes can be made, even by experienced developers. One of the most common mistakes is the incorrect implementation of OAuth. Even with the guidance of the RFC, it’s all too common to run into challenges during the implementation process. A typical mistake is insecure token storage, where developers fail to use proper encryption and protection mechanisms. This can result in sensitive access tokens being exposed, opening the door to unauthorised access or data breaches. Mishandling permissions is another common blunder, where inadequate validation and verification processes can result in unintended parties being granted excessive privileges, leading to compromised security and integrity.

Failure to properly implement OAuth leaves you vulnerable to cross-site request forgery (CSRF), which allows attackers to manipulate user sessions, potentially leading to unauthorised actions and data exposure. You could also make the mistake of failing to properly validate tokens, which undermines your whole OAuth implementation. Poor validation allows attackers to forge or manipulate tokens and bypass your security measures.
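A common defence against CSRF in an OAuth flow is the `state` parameter: a random value stored in the user’s session before the redirect and compared when the authorisation server calls back. The sketch below assumes the session is a plain dictionary and uses hypothetical helper names. A real framework would supply its own session object.

```python
import hmac
import secrets


def new_oauth_state(session: dict) -> str:
    # Unpredictable value tied to this browser session.
    state = secrets.token_urlsafe(32)
    session["oauth_state"] = state
    return state


def check_oauth_state(session: dict, returned_state: str) -> bool:
    # pop() makes the state single use, so a replayed callback fails.
    expected = session.pop("oauth_state", None)
    if expected is None or not returned_state:
        return False
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode(), returned_state.encode())
```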

Broken Object Level Authorisation (BOLA) is the rock star of the OWASP vulnerability chart. The use and enforcement of object level authorisation helps to determine who has access to certain resources or objects. For example, an application may use access control methods to grant read and write privileges to authenticated users while, within the object, specific attributes like “password” should only be available to the owner. BOLA vulnerabilities can allow a malicious user to gain unrestricted access by bypassing the intended restrictions.

Similarly, broken authorisation at the object property level can expose individual fields or properties to manipulation. This can compromise the overall integrity and confidentiality of your application. By reviewing the authorisation logic, analysing the enforcement of permissions and identifying any gaps or weaknesses, you can prevent these vulnerabilities. You can make your application more secure by incorporating role-based access control or attribute-based access control at the property level to provide granular permissions at the object level. Consider using dynamic authorization to enable real-time updates to permissions and ensure that your access controls keep pace with constantly changing permissions.
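The two levels of checking can be sketched in a few lines. The `Resource` shape, the `shared_with` set and the `OWNER_ONLY_FIELDS` list below are hypothetical and only illustrate the idea: first decide whether the user may see the object at all, then decide which of its properties they may see.

```python
from dataclasses import dataclass

OWNER_ONLY_FIELDS = {"password"}  # assumed list of sensitive properties


@dataclass
class Resource:
    owner_id: str
    shared_with: set
    fields: dict


def read_resource(resource: Resource, user_id: str) -> dict:
    # Object level: may this user see this object at all?
    if user_id != resource.owner_id and user_id not in resource.shared_with:
        raise PermissionError("no access to this object")
    # Property level: only the owner gets the sensitive fields.
    if user_id == resource.owner_id:
        return dict(resource.fields)
    return {k: v for k, v in resource.fields.items() if k not in OWNER_ONLY_FIELDS}
```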

Failure to restrict file and directory access or traversal can result in a security vulnerability. It’s called a path traversal attack, and allows threat actors to manipulate pathnames and access files and directories outside their intended scope. This could lead to data leakage, the execution of arbitrary code, or system compromise. You should always use input validation and sanitisation techniques. Ensure proper pathname restriction by using only secure APIs and libraries designed for file path operations. And you should use access control mechanisms at the file system level to enforce directory restriction and prevent unauthorised access.
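A minimal sketch of pathname restriction, assuming all user-supplied paths must stay inside one base directory: resolve the final path first, then check that it is still under the base. The function name `resolve_inside` is made up for this example.

```python
from pathlib import Path


def resolve_inside(base_dir: str, user_path: str) -> Path:
    base = Path(base_dir).resolve()
    # resolve() collapses ".." segments and follows symlinks, so the
    # check below is made against the real target location.
    candidate = (base / user_path).resolve()
    if candidate != base and base not in candidate.parents:
        raise ValueError("path escapes the allowed directory")
    return candidate
```

Absolute paths supplied by the user are caught by the same check, because joining an absolute path onto the base discards the base entirely.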

An application is only as secure as the foundation on which it rests. But in today’s fast-paced market, where time to market and delivery of new features are highly valued, security considerations often take a backseat. Security is proactive, not reactive. The whole infrastructure of your application will crumble if the design of your foundation is riddled with vulnerabilities.

Sensitive data can be exposed through weak encryption algorithms, poor validation, lack of secure communications and more. You should always be aware of these dangers and apply the principles of good design. Take the time to carry out threat modelling and build in security controls at EVERY level. Security starts at the beginning of the project — it shouldn’t be an afterthought. Build your software on a rock solid foundation of security.

It’s not quite the same as a robot uprising, but automated threats can still be quite mischievous. Don’t underestimate the cunning tactics of threat actors by not taking automation into account. Automated threats can involve brute forcing, malware propagation, IoT botnets, credential stuffing, running malicious scripts, stealing sensitive data or flooding your systems with malicious traffic.

Take steps to harden your infrastructure with defences like rate limiting, challenge-based authentication, IP filtering, anomaly detection, CAPTCHA challenges, behavioural analytics and more. You can also add a layer of protection against these mechanical adversaries by implementing web application firewalls and deploying threat intelligence solutions. It’s a battle, but with the right armour of security measures in place, your victory will be an effortless one.
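Rate limiting is the easiest of these defences to sketch. The example below is an in-memory sliding window limiter. The class name, the limits and the injectable clock are assumptions for illustration, and a production setup would more likely keep its counters in a shared store so that every server sees the same state.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    def __init__(self, max_attempts: int, window_seconds: float, clock=time.monotonic):
        self.max_attempts = max_attempts
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the limiter can be tested
        self._attempts = defaultdict(deque)

    def allow(self, client_id: str) -> bool:
        now = self.clock()
        attempts = self._attempts[client_id]
        # Forget attempts that have fallen out of the window.
        while attempts and now - attempts[0] >= self.window_seconds:
            attempts.popleft()
        if len(attempts) >= self.max_attempts:
            return False
        attempts.append(now)
        return True
```

A login handler could call `allow()` with the client’s IP address or account name and refuse the attempt when it returns `False`.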

Placing your security blindly in the hands of your security control might make you feel invincible, but without understanding the limitations of your countermeasure it can be dangerous. Attackers can exploit gaps and weaknesses that are overlooked, breaching your systems and compromising sensitive information. You should always approach security holistically.

Take time to understand the capabilities and limitations of security controls, take a defence-in-depth strategy, regularly assess and do penetration tests. Security should be a culture of continuous improvement. Continually educate yourself and your team on emerging threats and evolving security practices.

Developers may not be aware of the risks associated with insecure deserialisation. When deserialising data, developers often forget to validate and sanitise the input, opening the drawbridge for malicious entities. Without proper steps, an innocent enough data object can become a powerful exploit that can execute code or tamper with the application’s logic. You should fortify your deserialisation routines with proper input validation, integrity checks, and secure deserialisation libraries.
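Here is one possible shape for such a routine, sketched with the standard library. It assumes the payload is JSON (a data-only format, unlike formats that can instantiate arbitrary objects), protects it with an HMAC tag, and checks the structure after decoding. The `user_id` field is a hypothetical schema.

```python
import hashlib
import hmac
import json


def pack(data: dict, key: bytes) -> bytes:
    body = json.dumps(data, sort_keys=True).encode()
    tag = hmac.new(key, body, hashlib.sha256).hexdigest().encode()
    return tag + b"." + body


def unpack(blob: bytes, key: bytes) -> dict:
    tag, sep, body = blob.partition(b".")
    expected = hmac.new(key, body, hashlib.sha256).hexdigest().encode()
    # Integrity check first: tampered data is never parsed.
    if not sep or not hmac.compare_digest(tag, expected):
        raise ValueError("integrity check failed")
    data = json.loads(body)
    # Validate the shape instead of trusting whatever was decoded.
    if not isinstance(data, dict) or not isinstance(data.get("user_id"), int):
        raise ValueError("unexpected payload shape")
    return data
```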

When handling user-generated content or displaying data on a website, developers can overlook the importance of input encoding. If you don’t do enough to properly sanitise or validate user input or commands, they can be tampered with, opening the door to cross-site scripting (XSS) vulnerabilities and potential command or SQL injection attacks. By exploiting these vulnerabilities, threat actors can manipulate databases, execute remote code or gain access to sensitive data.

To ensure the security and integrity of your application, it’s critical to thoroughly sanitise and validate all input, effectively neutralising any malicious elements. To reduce the impact of potential attacks, incorporate secure coding practices such as parameterised queries, prepared statements, and context-aware output encoding. Output encoding in particular helps to prevent cross-site scripting (XSS) attacks.
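Both ideas fit in a short sketch. It assumes a SQLite database with a hypothetical `users` table and an HTML output context. Other databases and other output contexts (JavaScript, URLs, attributes) need their own placeholder syntax and encoders.

```python
import html
import sqlite3


def find_user(conn: sqlite3.Connection, name: str) -> list:
    # The ? placeholder sends the value separately from the SQL text,
    # so quotes in the input cannot change the structure of the query.
    query = "SELECT id, name FROM users WHERE name = ?"
    return conn.execute(query, (name,)).fetchall()


def render_greeting(name: str) -> str:
    # Encode on output so user input is displayed, never interpreted.
    return f"<p>Hello, {html.escape(name)}</p>"
```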

When it comes to form validation, it’s important not to rely solely on the validation framework. The framework’s rules may be too lenient, leading developers to overestimate its strength. Review and validate the capabilities of your framework to ensure that it is in line with your security requirements.

While cryptography is the guardian of security and integrity, it can also be a double-edged sword when used wrong. The strength of your cryptographic defences can be directly undermined by improper use.

One example is failing to move from the outdated Data Encryption Standard (DES) to stronger algorithms like AES or RSA. Another is choosing a key that is too short: AES accepts 128-bit, 192-bit or 256-bit keys, and longer keys increase the difficulty of cryptographic attacks. A third is using a weak random number generator that relies on a predictable system for generating keys. There are many more potential mistakes when using cryptography, such as flawed key management practices, ignoring algorithm vulnerabilities, and lack of training.

Avoiding these examples and following proper practices such as employing well-established cryptographic libraries and algorithms that have withstood the test of time is critical. Encryption can be a lot of work, but having a deep understanding of best practices and cryptographic principles can make a world of difference for security.
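For the random number generator point, most languages ship a cryptographically secure source alongside the general-purpose one. In Python that is the `secrets` module rather than `random`. The helper names below are hypothetical, and the sketch only covers generating key material and passwords, not how to store or use them.

```python
import secrets
import string


def generate_key(bits: int = 256) -> bytes:
    # AES only defines these three key sizes.
    if bits not in (128, 192, 256):
        raise ValueError("AES keys are 128, 192 or 256 bits long")
    return secrets.token_bytes(bits // 8)


def generate_password(length: int = 20) -> str:
    alphabet = string.ascii_letters + string.digits + string.punctuation
    # secrets.choice draws from the operating system's secure random source.
    return "".join(secrets.choice(alphabet) for _ in range(length))
```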

In the realm of secure development, it’s not enough to implement cryptography alone; you should also take preventive measures for potential cryptographic failures. Even the most robust encryption can fall prey to implementation bugs, side-channel attacks, or the discovery of new vulnerabilities. That’s why it’s very important to be prepared by regularly checking your libraries. Updating your libraries and dependencies as needed ensures you benefit from the latest security patches. The impact of potential cryptographic failures can be lessened by implementing methods such as key rotation, proper key storage, and secure key management.

Let’s use the castle analogy again: if everything you develop is a castle, using insecure default configurations is like leaving the drawbridge down and the doors unlocked. Shipping new software is exciting, and in the excitement it’s easy to overlook locking down default settings. Without secure default configurations, your application can suffer from unpatched vulnerabilities, weak passwords, and unrestricted access.

To avoid this symphony of misfortune, pay attention to the details. Thoroughly review and validate configuration settings, follow industry best practices, and employ automated tools to detect and remediate misconfigurations. Ensure secure configuration of servers, frameworks, and any components involved. Don’t forget to change default passwords and perform a thorough security assessment before sending software out into the world.

It may not seem like it, but keeping a comprehensive record of your software and hardware assets is critical to security. If you’re not aware of your entire ecosystem, software can go unpatched, dependencies can be forgotten, and components can become obsolete — all waiting to be exploited. Inventory management helps keep this at bay. You can even automate it with asset discovery tools and set reminders to update the inventory. Performing regular vulnerability assessments is easier when you know what to check.

Threat modelling is truly an art, when developers transform into strategic masterminds, telling the future and seeing all the devious ways their creations can be exploited. Yet, it’s still a commonly neglected step. And developers miss out on the opportunity to identify potential weaknesses, assess risks, and find countermeasures. Few things feel better than stopping an attack before it even happens and being one step ahead.

Leave the vintage goods for the antique roadshow and update your components. You shouldn’t be nostalgic for an outdated, vulnerable component. Stop providing ample opportunities for attackers. Prioritise keeping an eye out for updates, following security advisories, and using automated dependency scanning tools. There are plenty of tools to choose from, including SonarQube, Nessus, Snyk, Dependabot, and GitLab Storm. Always make sure your codebase is using the latest and most secure versions of its components. It’s important to use libraries that have active maintenance and security support to avoid a weak link in your application’s armour.

Here’s our own cautionary tale. In May 2018, we at passbolt were using a flawed random number generator. While there was a low possibility of exploitation, it was still a vulnerability and we’re thankful it was caught! Fixing the mistake involved rewriting part of the password generator to ensure the random number generator (RNG) was cryptographically secure. The replacement RNG has been well vetted and uses strong cryptographic randomness. You can learn more in the incident report on the website.

It’s far too common for developers to believe that “security by obscurity” is enough to keep their development environment safe from attackers. Yet even without a domain, threat actors can find your development environment. You should always treat your development domains as if they were exposed to the world, because you never know when the veil of obscurity might be lifted. Security should never rely on hiding, but on creating robust defences. Use strong authentication mechanisms, secure access controls, proper network segmentation, and thorough vulnerability scanning even when creating and using development environments.

Security can be severely compromised if error messages are poorly implemented or leak sensitive information. Attackers can gain valuable insight into the system and potentially find vulnerabilities from the information leaked in error messages. Error messages should be general in nature, but detailed enough to be useful. They shouldn’t reveal any specific information about the underlying system in order to prevent misuse.

A common mistake is when the database (DB) ID is included in error messages. The inadvertent inclusion of DB IDs in error messages can give potential attackers information about the structure and organisation of your database. This can allow threat actors to continue to exploit the system. It’s important to ensure that error messages do not reveal sensitive or internal identifiers, such as database IDs.

Logging error information should be implemented securely. Appropriate access controls and encryption methods should be used to prevent exploitation. You can protect user and application confidentiality and prevent data leakage by implementing robust error handling.
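One pattern that satisfies both needs is to give the user a generic message with an opaque incident reference, and keep the details in the server-side log. The sketch below is an illustration under that assumption. The logger name, the message text and the `handle_error` helper are made up for the example.

```python
import logging
import uuid

logger = logging.getLogger("app.errors")


def handle_error(exc: Exception) -> dict:
    incident_id = uuid.uuid4().hex
    # Full detail (including any internal identifiers) stays server-side.
    logger.error("incident %s: %r", incident_id, exc)
    # The client gets a generic message plus a reference for support.
    return {
        "error": "Something went wrong. Please try again later.",
        "incident_id": incident_id,
    }
```

Support staff can then look up the incident reference in the logs without the response ever exposing a database ID or stack trace.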

We weren’t all born Sherlock Holmes and that’s fine. The world needs detectives and the world needs developers. There’s no need to be both, especially at the risk of your incident detection and response. Without comprehensive logging mechanisms, it’s a challenge to identify and investigate security incidents or suspicious activities. Establishing a structured logging framework can help capture relevant security events, including authentication attempts, access control violations, and potential indicators of compromise.

You should store these logs securely for an appropriate duration to help with forensic analysis and incident response. Implementing live monitoring and alerting tools allows for even more proactive detection. Good logging and monitoring not only saves you work and time, it also minimises the impact of incidents and improves the security posture of your application.

Logs can be an absolute treasure trove of information, but you should take precautions to avoid log forgery. Attackers can slip their own content into your logs, manipulating entries to mask their malicious activities or erase their traces entirely. That creates a nightmare for the developers and security teams who have to investigate. To ward off log injection, use proper input sanitisation techniques to cleanse your log entries of any potential exploitation. You can bolster the strength of your logs by using mechanisms for entry validation. With proper steps in place, you can keep your logs clean.
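A simple form of that sanitisation is to escape control characters before user input reaches the log, so a newline can’t be used to start a forged entry. This is a minimal sketch. The function name and the 200-character cap are assumptions.

```python
import re

_CONTROL_CHARS = re.compile(r"[\x00-\x1f\x7f]")


def sanitise_for_log(value: str, max_length: int = 200) -> str:
    def escape(match: re.Match) -> str:
        # Make the character visible instead of letting it act.
        return "\\x{:02x}".format(ord(match.group()))

    # Truncating also stops oversized input from flooding the log.
    return _CONTROL_CHARS.sub(escape, value)[:max_length]
```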

When sensitive data like passwords, personally identifiable information, or cryptographic keys live in the memory, implementing secure management techniques is invaluable. Failing to implement adequate safeguards can expose sensitive information to unauthorised access or manipulation. What does proper memory management involve? Minimising the lifespan of sensitive data in memory, memory allocation and deallocation, securely cleaning memory after use to prevent residual information, and using memory protection mechanisms that are offered by the underlying OS or programming language. You should also be cautious about side-channel attacks that can access sensitive data by exploiting variations in memory access patterns or timing.

Accessing memory after it’s been freed can lead to unpredictable behaviour and potential security risks. Hence the name “use after free” for this type of vulnerability. Use after free vulnerabilities are a bit like raising the dead, and let’s agree, it’s better to avoid both of them. To avoid this vulnerability, you should be careful with memory management or use memory safe languages, and ensure that objects are deallocated correctly so they’re not accessed afterward.

This is the dragon of our quest. It devours everything in its path, leaving nothing but chaos and instability in its wake. If a system is not able to effectively manage and allocate its resources, this can lead to excessive consumption and a degradation of performance. Unchecked resource consumption can lead to crashes, non-responsiveness, denial-of-service attacks, system instability, scalability problems, side-channel attacks and reputation damage. Attackers can deliberately trigger operations to overwhelm the system and cause it to fail.

The exhaustion of resources can lead to major vulnerabilities that allow attackers to analyse variations in the use of resources in order to infer critical information such as cryptographic keys, user activity or sensitive data. You can tame this ravenous beast by implementing proper resource management. You should set resource limits and thresholds, implement intelligent caching, optimise algorithms and ensure data structures are minimised to avoid unnecessary consumption. It’s also a good idea to use monitoring and alerting systems to identify patterns of resource misuse.
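Setting a limit can be as simple as refusing to read more than you are prepared to handle. The sketch below caps the size of an incoming payload. The 1 MiB figure and the `read_limited` name are assumptions, and the same idea applies to item counts, recursion depth or request duration.

```python
MAX_BODY_BYTES = 1_048_576  # assumed limit of 1 MiB


def read_limited(stream, limit: int = MAX_BODY_BYTES) -> bytes:
    # Read one byte past the limit: enough to detect oversized input
    # without ever buffering the whole payload.
    data = stream.read(limit + 1)
    if len(data) > limit:
        raise ValueError("payload exceeds the configured limit")
    return data
```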

Without restrictions on your operations, buffer overflow and out-of-bounds access can run free and lead to vulnerabilities or crashes. To prevent this, you should enforce strict checks and limitations to make sure all operations stay within the allocated memory. Be the gatekeeper of memory access, diligently validating and sanitising memory addresses before performing read or write operations. Ensure that you’re targeting the intended memory buffers and not inadvertently tampering with critical program data or other variables.

A case of rose-tinted glasses with dependent packages? When you stumble upon a gem of a package, it’s natural to be entranced by its lure. Your code may be a masterpiece, crafted with the utmost precision and care. But security vulnerabilities may lie in your dependent packages, and they are often hidden in the very dependencies that your dependencies rely on. Take colors.js, a popular package that shows how a dependency can unleash a security conundrum on your codebase. With colors.js, the vulnerabilities were intentionally committed by its author to trigger loops causing a denial of service. In other cases, it’s not uncommon for packages to unwittingly harbour unpatched vulnerabilities.

It’s like a highly skilled swordsman wielding a flimsy blade, especially when the vulnerability is in a system-level package like openssl. To prevent it, you should keep a close eye on vulnerability reports, quickly apply updates and patches, and conduct thorough security audits. Keep in mind that a chain is only as strong as the weakest link in it. When choosing dependencies, be discerning and look for those with a proven track record, a robust security posture and a responsive development community.

Avoid endless chains of dependencies, because simplicity is the key to resilience. In this world of code, my fellow warrior, the strength of your defences lies in your ability to see beyond the lure of external packages. By monitoring the security of your dependent packages on a regular basis and taking proactive measures, you can keep your code as free of vulnerabilities as possible.

The practice of using code from other developers is a time honoured tradition and passbolt is all for the ethical sharing of knowledge. But, without proper validation using code from other developers can throw a wrench into your secure development lifecycle. It’s somewhat like letting strange women lying in ponds giving out swords decide your government. There may be a lot of unknowns that leave you vulnerable to potential consequences. Take time to analyse the code for vulnerabilities, review its functionality, and verify it’s compatible with your system. It may be less convenient, but it minimises the risks and ensures a solid foundation for your project.

Secure authentication and authorisation checks on API endpoints can often be overlooked, allowing attackers to escalate privileges or access unauthorised resources. To prevent such breaches, it’s important to use robust authentication and authorization methods within your API endpoints. You should also employ secure token handling, strict access controls, and thoroughly validate user input. Fortifying your API endpoints ensures that only authorised users can access sensitive data, thwarting any attempts at privilege escalation.

Consider a scenario where a user authentication system exists and certain endpoints are meant to be accessible only to authorised users. But because of an oversight, the system is misconfigured and these endpoints are visible to all users. For instance, take an e-commerce platform that uses the endpoint “/admin/deleteProduct” to delete products from a catalogue. If this endpoint is revealed to unauthorised users, it can be targeted for exploitation. This vulnerability can lead to significant damage to the system, such as the removal of essential products, manipulation of inventory, or a disruption of operations.

Attackers can use 401 or 403 status codes to understand what endpoints exist, allowing them to further explore and exploit the system. You should carefully consider the choice of HTTP status codes and the information disclosed in error messages. Instead of 401 or 403 status codes, a more secure approach would be to respond with a generic 404 (Not Found) status code, without explicitly confirming or denying the existence of the requested resource. This alternative enhances security by concealing the existence of restricted endpoints from unauthorised users, making it harder for attackers to identify potential targets.
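In code, this approach comes down to giving “does not exist” and “exists but is off-limits” the same answer. The function below is a deliberately tiny, hypothetical sketch of that decision and not a complete authorisation layer.

```python
def status_for(resource_exists: bool, user_is_authorised: bool) -> int:
    # Missing and forbidden resources look identical to the caller,
    # so the response never confirms that a restricted endpoint exists.
    if resource_exists and user_is_authorised:
        return 200
    return 404
```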

APIs can be a lucrative entry point for threat actors that are seeking to exploit your application. APIs act as gateways to our systems, allowing authorised users to access resources and perform actions. However, a single unprotected endpoint can become the weak link in our armour, providing an open invitation to attackers who are always on the lookout for weak spots. They can exploit these vulnerabilities to bypass authentication, inject malicious code, or gain unauthorised access to sensitive data. Conducting security audits to identify and protect your API provides a shield against attackers. You should examine each API endpoint for unprotected areas and raise your defences by addressing them.

Server-side request forgery (SSRF) is a cunning and elusive vulnerability that turns your server into an accomplice in the hands of an attacker. SSRF involves an attacker tricking servers into making unintended requests to internal resources or external endpoints that are under their control. The attacker will trick the server into fetching sensitive data or executing actions that should be off-limits. To prevent SSRF, you need to strengthen your server’s defences with input validation. Each server request should be examined to make sure the target destination is legitimate and authorised.

You should also be vigilant for any indirect requests made by your server, such as DNS lookups or HTTP redirects. Attackers may exploit these to create SSRF attacks. With a strict boundary between internal and external resources, you minimise the risks. SSRF attacks can be intricate, but taking the time now to take appropriate measures can save you time, money, and a lot of work later.
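A rough sketch of such a check is shown below. It assumes a hypothetical allow-list containing `api.example.com`, accepts only HTTPS, and rejects hosts whose DNS records point at internal address ranges. The resolver is passed in as a parameter so the check can be exercised without network access.

```python
import ipaddress
import socket
from urllib.parse import urlsplit

ALLOWED_HOSTS = {"api.example.com"}  # hypothetical allow-list


def resolve_host(host: str) -> list:
    return [info[4][0] for info in socket.getaddrinfo(host, None)]


def is_safe_url(url: str, resolver=resolve_host) -> bool:
    parts = urlsplit(url)
    if parts.scheme != "https" or parts.hostname not in ALLOWED_HOSTS:
        return False
    addresses = resolver(parts.hostname)
    if not addresses:
        return False
    for address in addresses:
        ip = ipaddress.ip_address(address)
        # An allowed name must not resolve to an internal address.
        if ip.is_private or ip.is_loopback or ip.is_link_local or ip.is_reserved:
            return False
    return True
```

The same check would need to run again on every redirect target, and the request should ideally connect to the address that was validated, since a second DNS lookup could return a different answer.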

Now that you’re armed with knowledge, it’s time to fix your metaphorical armour and wield your keyboards with the utmost caution. Remember that a secure development lifecycle is the shield of the development process, keeping your creations safe from harm. By identifying these mistakes and proactively researching, you’re already taking a big step in the quest for a secure development lifecycle. Each stage from design to deployment presents an opportunity to create a better, more secure application.

The quest continues with more information about secure development around the corner, but we want to hear about any challenges you’ve encountered. Want more information or to offer feedback? Visit passbolt at the community.

7 min. read

Passbolt had their first ever hackathon. For three epic days, seven teams battled it out, but only one emerged as the ultimate champion.

3 min. read

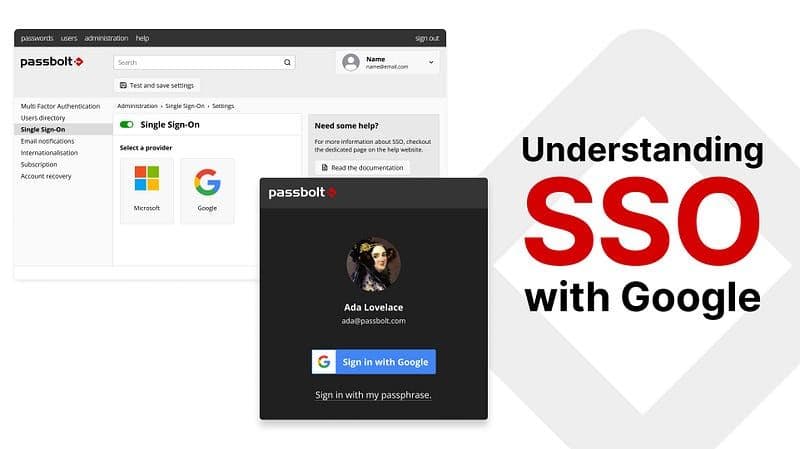

With the power of passbolt and Google SSO, you can use your existing Google credentials to log into passbolt.